Compliance and regulations are one way to achieve IT security. If one looks at industries that have been around for a very long time, and have very high stakes, for example commercial airline travel, mining, oil&gas, etc., one can find compliance and regulations everywhere. It’s how safety is managed in these environments. I have always been fascinated with safety incidents and read a lot of reports around them — these are almost always free to read and very detailed, unlike IT security incident reports. See for example the now very famous Challenger Accident Report (“For a successful technology, reality must take precedence over public relations, for nature cannot be fooled.”) or the similarly famous, and more recent AF-447 accident report. These are fascinating reads and if you are willing to read between the lines, they all talk about systems issues — not a single person making a single mistake.

Continue reading

Category Archives: security testing

Testing and pentesting, a road to effectiveness

I have been involved in computer security and security testing for a while and I think it’s time to talk about some aspects of it that get ignored, mostly for the worse. Let me just get this out of the way: security testing (or pentesting, if you like) and testing are very closely related.

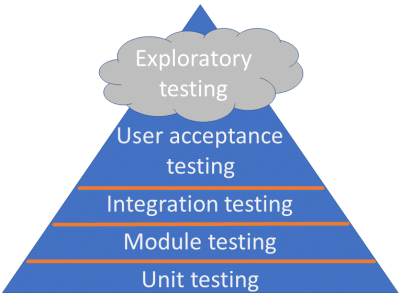

The Testing Pyramid

What’s really good about security testing being so close to testing is that you can apply the standard, well-know and widely used techniques from testing to the relatively new field of security testing. First of all, this chart:

Continue reading