Variable renumbering in SAT solvers keeps a mapping between the external (outer) variable numbers that are visible to the user and the internal variable numbers that are visible to the system. The trivial mapping that most SAT solvers use is the one-to-one mapping, where there is no difference between outer and internal variables. A smart mapping leaves variables that have been set or eliminated out of the active internal numbering, so no data needs to be kept for them internally and the internal datastructures can be smaller.
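To make the idea concrete, here is a minimal sketch of how such a mapping could be built. It is not CryptoMiniSat's actual code: the function name `build_renumbering`, the `is_active` flag list, and the choice to store the mapping as a permutation with the active variables first are all assumptions made for illustration.

```python
def build_renumbering(is_active):
    """Build outer<->internal maps from per-variable activity flags.

    is_active[outer] is False for variables that have been set or
    eliminated (hypothetical input format). Active variables receive
    the internal numbers 0..num_active-1, so any internal array only
    needs num_active entries.
    """
    n = len(is_active)
    # Active variables come first, in their original relative order.
    inter_to_outer = [v for v in range(n) if is_active[v]]
    num_active = len(inter_to_outer)
    # Inactive variables are parked at the end of the numbering.
    inter_to_outer += [v for v in range(n) if not is_active[v]]

    # Invert the permutation to get the other direction.
    outer_to_inter = [0] * n
    for inter, outer in enumerate(inter_to_outer):
        outer_to_inter[outer] = inter
    return outer_to_inter, inter_to_outer, num_active


outer_to_inter, inter_to_outer, num_active = build_renumbering(
    [True, False, True, False, True]
)
```

With five outer variables of which two are inactive, only the first `num_active` internal numbers refer to variables the solver still has to work on.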

Advantages

Having smaller internal data structures helps achieve a lower memory footprint and better cache usage.

The memory savings are useful because some CNFs have tens of millions of variables. If the solver uses the typical watched literal scheme, it needs 2 arrays for each variable, one per literal. If we are using 64b pointers and 32b array sizes, it’s 32B for each variable, so 32MB for every million variables just to keep the watched literal arrays(!). I have seen people complaining that their 100M variable problem runs out of memory. If we count that right, that’s 3.2GB of memory only to hold the watched literal array pointers and sizes, not any data at all.
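The arithmetic can be checked with a few lines of Python. The breakdown of the 16 bytes per array into a pointer, a size field, and a capacity field is an assumption (the text only states 64b pointers, 32b sizes, and the 32B total); the constant and function names are made up for this sketch.

```python
POINTER_BYTES = 8    # 64b pointer to the array's data
SIZE_BYTES = 4       # 32b element count
CAPACITY_BYTES = 4   # 32b capacity field (assumed, to reach 16B per array)
ARRAYS_PER_VAR = 2   # one watch array per literal: x and NOT x


def watch_header_bytes(num_vars):
    """Bytes used only by the empty watch array headers."""
    per_array = POINTER_BYTES + SIZE_BYTES + CAPACITY_BYTES
    return num_vars * ARRAYS_PER_VAR * per_array


per_million = watch_header_bytes(1_000_000)     # 32_000_000 bytes = 32MB
hundred_m = watch_header_bytes(100_000_000)     # 3_200_000_000 bytes = 3.2GB
```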

As for the CPU cache benefits: modern CPUs work using cache lines which are e.g. 64B long on Intel Sandy Bridge. If half of the variables are set already, the array holding the variable values — which will be accessed non-stop during propagation — will contain 50% useless data. In practice the speedup achieved can be upwards of 10%.

The simple problems

One problem with having a renumbering scheme is that you need to keep track of which datastructure is numbered in which way. The easy solution is to renumber absolutely everything. This is costly, however, as the mapping has to change every once in a while when new variables have been set. If everything is renumbered, then the eliminated variables’ data needs to be updated according to the new mapping every time the mapping changes, which can take a significant amount of time. So it’s best not to renumber the eliminated variables’ data. Similarly, if disconnected component analysis is used and everything is renumbered, then the disconnected components’ saved solutions need to be renumbered as well, along with the clauses that have been moved to the components.

An approach I have found to be satisfying is to keep every dynamic datastructure such as variable states (eliminated/decomposed/etc.), variable values (True/False/Unknown), clauses’ literals, etc. renumbered, while keeping mostly static datastructures such as eliminated clauses or equivalent literal maps non-renumbered. This works very well in practice as it allows the main system to shuffle the mapping around while not caring about all the other systems’ data.
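The split between renumbered and non-renumbered data could look roughly like the sketch below. The class `Solver`, its fields, and the literal representation as `(variable, negated)` pairs are hypothetical and chosen only to keep the example short; a real solver has many more dynamic structures to update.

```python
class Solver:
    def __init__(self, num_vars):
        # Start with the trivial one-to-one mapping.
        self.outer_to_inter = list(range(num_vars))
        self.inter_to_outer = list(range(num_vars))
        # Dynamic data, indexed by INTERNAL variable number.
        self.values = [None] * num_vars   # True / False / None (unknown)
        self.clauses = []                 # lists of (inter_var, negated)
        # Mostly static data, kept in OUTER numbering, never renumbered.
        self.elim_clauses = []            # lists of (outer_var, negated)

    def renumber(self):
        """Move unassigned variables to the front of the internal numbering."""
        n = len(self.values)
        unset = [v for v in range(n) if self.values[v] is None]
        assigned = [v for v in range(n) if self.values[v] is not None]
        order = unset + assigned          # order[new_inter] = old_inter
        old_to_new = [0] * n
        for new, old in enumerate(order):
            old_to_new[old] = new

        # Renumber the dynamic, internally numbered data.
        self.values = [self.values[old] for old in order]
        self.clauses = [
            [(old_to_new[var], neg) for var, neg in clause]
            for clause in self.clauses
        ]
        # Compose the old mapping with the new permutation.
        self.inter_to_outer = [self.inter_to_outer[old] for old in order]
        for inter, outer in enumerate(self.inter_to_outer):
            self.outer_to_inter[outer] = inter
        # self.elim_clauses is deliberately left untouched.
```

Because `elim_clauses` stays in outer numbering, `renumber()` can be called as often as needed without its cost growing with the amount of eliminated data; the price is that every access to it has to go through `outer_to_inter`.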

The hard problem

The above is all fine and dandy until bounded variable addition (BVA) comes onto the scene. This technique adds new variables to the problem to simplify it. These new variables will look like new outer variables, which seems good at first sight: the system could simply print the solution to all variables except the last N that were added by BVA and are not part of the original problem. However, if the caller adds new variables after the call to solve(), what can we do? The variables added by the caller and the BVA variables will be mixed up: start with a bunch of original variables, sprinkle the end with some BVA, then some original variables, then some BVA…

The trivial solution to this is to have another mapping, one that translates variable numbers between the BVA and non-BVA system. As you might imagine, this complicates everything. Another solution is to forcibly eliminate all BVA variables after the call to solve(), let the user add the new clauses, and perform BVA again. Another even more complicated solution is to keep track of the variables being added, then re-number all variables inside all datastructures to move all BVA variables to the end of the variable array. This is expensive but only needs to be done once after the call to solve(), which may be acceptable. Currently, CryptoMiniSat uses the trivial scheme. Maybe I’ll move to the last (and most complicated) system later on.
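As an illustration of the trivial scheme, the extra mapping could be maintained as sketched below. This is only a guess at the general shape, not CryptoMiniSat's implementation: the class `BvaMap`, its method names, and the use of `None` to mark BVA variables are invented for the example.

```python
class BvaMap:
    """Translate between numbering with and without BVA variables."""

    def __init__(self):
        # Indexed by with-BVA number; None marks a BVA variable.
        self.with_to_without = []
        # Indexed by the number the user sees.
        self.without_to_with = []

    def new_var(self, is_bva=False):
        """Register a variable and return its with-BVA number."""
        with_bva = len(self.with_to_without)
        if is_bva:
            self.with_to_without.append(None)
        else:
            self.with_to_without.append(len(self.without_to_with))
            self.without_to_with.append(with_bva)
        return with_bva

    def user_model(self, model_with_bva):
        """Drop BVA variables from a model, keeping the user's order."""
        return [model_with_bva[w] for w in self.without_to_with]


m = BvaMap()
for _ in range(3):
    m.new_var()             # original variables
for _ in range(2):
    m.new_var(is_bva=True)  # added by BVA during the first solve()
for _ in range(2):
    m.new_var()             # added by the caller after solve()
m.new_var(is_bva=True)      # more BVA in the next solve()
```

Here the user sees variables 0 to 4, while the with-BVA numbering has eight variables with the BVA ones interleaved, which is exactly the mixed-up layout described above.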

Conclusion

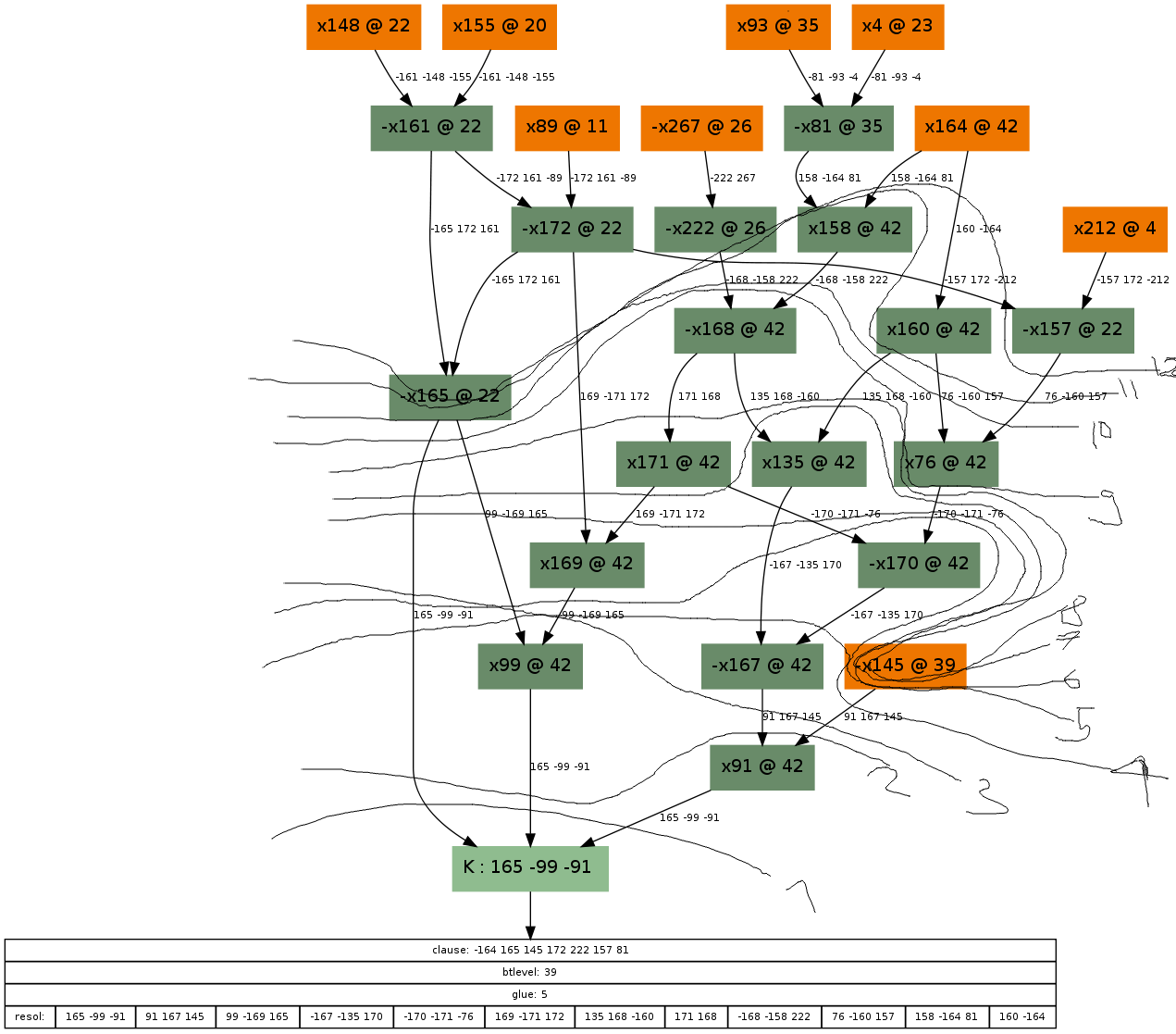

Variable renumbering is not for the faint of heart. Bugs become significantly harder to track down, as all debug messages need to be translated to a common variable numbering or they make no sense at all. It’s also very easy to introduce bugs through variable renumbering. A truly difficult bug I had was caused by the disconnected component finder’s sub-solver renumbering its internal variables: when I tried to import some values from the sub-solver back into the main solver, I used the wrong variable numbers.