Approximate model counting lets us count the number of solutions (or “models”) of propositional satisfiability problems. This problem seems trivial at first, given a propositional solver that can find a single solution: find one solution, ban it, ask for another one, ban it, and so on until all solutions are counted. The issue is that the number of solutions is sometimes 2^50 or more, and counting this way is far too slow. There are about 2^266 atoms in the universe, so counting anywhere near that is impossible with this method.
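To make the ban-and-repeat approach concrete, here is a minimal Python sketch of counting with blocking clauses. It assumes clauses in DIMACS-style integer form (a positive integer is a variable, a negative one its negation). The helper `find_solution` is a hypothetical brute-force stand-in for a real SAT solver call, so the sketch only works for a handful of variables.

```python
import itertools


def find_solution(clauses, num_vars):
    """Hypothetical stand-in for a SAT solver: brute force, returns a dict or None."""
    for bits in itertools.product([False, True], repeat=num_vars):
        assignment = dict(zip(range(1, num_vars + 1), bits))
        if all(any(assignment[abs(l)] == (l > 0) for l in c) for c in clauses):
            return assignment
    return None


def count_by_blocking(clauses, num_vars):
    """Count models 1-by-1: find a solution, ban it, repeat."""
    clauses = [list(c) for c in clauses]
    count = 0
    while (sol := find_solution(clauses, num_vars)) is not None:
        count += 1
        # Blocking clause: falsified only by this exact solution, so it bans it.
        clauses.append([-v if val else v for v, val in sol.items()])
    return count


# (v1 OR v2) AND (v2 OR NOT v3 OR NOT v4) over v1..v4
print(count_by_blocking([[1, 2], [2, -3, -4]], 4))  # 11
```

The loop body runs once per model, which is exactly why this approach cannot get anywhere near 2^50 models.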

Exact Counting

Since we cannot count 1-by-1, we are left with trying to count in some smarter way. There are a number of methods to count exactly; the simplest is to branch on a variable: count the solutions when the variable is True, count them when it is False, recursively, and add the two up. With caching of components that recur during this splitting, this can be quite successful, as implemented by sharpSAT (see Marc Thurley’s paper).
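The branch-and-add idea with a cache can be sketched in a few lines of Python. This is a simplified illustration, not sharpSAT’s algorithm: it caches whole residual formulas (keyed together with the set of still-unassigned variables) instead of splitting the formula into independent components, and its cache is unbounded, so no grooming ever happens. Clauses are assumed to be DIMACS-style integer tuples, and `simplify` / `count_models` are hypothetical names.

```python
from functools import lru_cache


def simplify(formula, lit):
    """Make `lit` true: drop satisfied clauses, delete the falsified literal.
    Returns None on conflict (an empty clause), else the residual formula."""
    residual = []
    for clause in formula:
        if lit in clause:
            continue
        reduced = tuple(l for l in clause if l != -lit)
        if not reduced:
            return None
        residual.append(reduced)
    return frozenset(residual)


def count_models(clauses, num_vars):
    """Exact model count: branch on a variable and add up both branches."""

    @lru_cache(maxsize=None)  # cache: (residual formula, free vars) -> count
    def count(formula, free_vars):
        if not formula:
            # No constraints left: every remaining variable is free.
            return 2 ** len(free_vars)
        var = abs(next(iter(next(iter(formula)))))  # some variable still in use
        rest = free_vars - {var}
        total = 0
        for lit in (var, -var):  # var = True, then var = False
            sub = simplify(formula, lit)
            if sub is not None:
                total += count(sub, rest)
        return total

    formula = frozenset(tuple(c) for c in clauses)
    if any(len(c) == 0 for c in formula):
        return 0  # an empty clause can never be satisfied
    return count(formula, frozenset(range(1, num_vars + 1)))


print(count_models([[1, 2], [2, -3, -4]], 4))  # 11
```

Variables that vanish from the residual formula are unconstrained, which is where the factor of 2 per free variable comes from; real component caching additionally multiplies the counts of independent sub-formulas.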

These counters can scale quite well, but they have some downsides. In particular, when memory runs out, the cache needs to be groomed, sometimes falling back to 1-by-1 counting, which we know will fail, as there is no way 2^200 solutions can be counted 1-by-1 in any reasonable amount of time. The caching systems used are smart, though, retaining the most recently used entries when the cache is groomed. However, this grooming can sometimes lead to cyclic behaviour that effectively simulates 1-by-1 counting.

Approximate Counting

What Chakraborty, Meel and Vardi did in their paper was to create a counter that counts not exactly, but “probably approximately correctly”. This jumble of terms means that, with a certain probability, the count is correct to within a given tolerance. Both the probability and the tolerance can be improved by spending more CPU time. In practical terms, the probability can be set to over 99.99% and the tolerance to under 20%, while still beating exact counters. Note that 20% is not much: for example, 2^60*1.2 ≈ 2^(60.3).
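Written out, the “probably approximately correct” guarantee has the following form, where F is the formula, sol(F) its set of solutions, c the returned count, ε the tolerance, and δ the allowed failure probability (so a probability of 99.99% corresponds to δ = 0.0001 and 20% to ε = 0.2). The second line is the arithmetic behind the 20% remark.

```latex
\Pr\!\left[\frac{|\mathrm{sol}(F)|}{1+\varepsilon} \;\le\; c \;\le\; (1+\varepsilon)\,|\mathrm{sol}(F)|\right] \;\ge\; 1-\delta

(1+0.2)\cdot 2^{60} \;=\; 2^{60+\log_2 1.2} \;\approx\; 2^{60.26}
```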

So what’s the trick? How can we count approximately and still give guarantees about the count? The trick is in fact quite simple. Let’s say you have to count balls, and there are thousands of them. One way to do it is to count them 1-by-1. But if you have a machine that can approximately halve the number of balls you have, it can be done a lot faster: you check, counting 1-by-1, whether you have at least 5 balls; if you do, you halve them and check again whether at least 5 remain, and so on. Eventually, let’s say you halved them 11 times and were left with 3 balls. So, approximately, you must have had 3*2^11 = 6144 balls to begin with. In total you had to execute a 1-by-1 counting step only 11*5+3+1 = 59 times (eleven checks that each found 5 balls, plus a final check that found 3 balls and then one more attempt that found none), a lot less than 6144! This is the idea used by ApproxMC.
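The ball-counting procedure can be simulated in Python. This is a minimal sketch under stated assumptions: the “halving machine” keeps each ball independently with probability 1/2, the threshold is 5 as in the story, and `approx_count` / `count_up_to` are hypothetical names. A single run like this can be off by a sizeable factor; ApproxMC itself derives its threshold from the desired tolerance and repeats the estimate several times to obtain its guarantee, and this sketch does neither.

```python
import random

_NO_BALL = object()  # sentinel meaning "no more balls"


def count_up_to(balls, limit):
    """Count balls 1-by-1, stopping once `limit` have been seen.
    Returns (balls_seen, requests); a request that finds no ball also counts."""
    it = iter(balls)
    seen = requests = 0
    while seen < limit:
        requests += 1
        if next(it, _NO_BALL) is _NO_BALL:
            break
        seen += 1
    return seen, requests


def approx_count(balls, threshold=5, seed=None):
    """Halve until fewer than `threshold` balls remain, then scale back up.
    Returns (estimate, halvings, requests)."""
    rng = random.Random(seed)
    remaining = list(balls)
    halvings = total_requests = 0
    while True:
        seen, requests = count_up_to(remaining, threshold)
        total_requests += requests
        if seen < threshold:
            return seen * 2 ** halvings, halvings, total_requests
        # Approximate halving: keep each ball independently with probability 1/2.
        remaining = [b for b in remaining if rng.random() < 0.5]
        halvings += 1


estimate, halvings, requests = approx_count(range(6144), seed=1)
print(estimate, halvings, requests)
```

For a run like the one in the text (11 halvings, 3 balls left), the request counter comes to 11*5+3+1 = 59.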

Approximately Halving Using XORs

The “approximate halving” function used by ApproxMC is the plain XOR function, populated with variables picked with 50% probability. So for example if we have variables v1…v10, we pick each variable with 50% probability and add them into the same XOR. Let’s say we picked v1,v2,v5 and v8. The XOR would then be: v1⊕v2⊕v5⊕v8=1. This XOR is satisfied if an odd number of variables from the set {v1,v2,v5,v8} are 1. This intuitively forbids about half the solutions. The “intuitively” part of course is not enough, and if you read the original paper you will find the rigorous mathematical proof for the approximate halving of solutions this XOR function provides.
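The halving claim can be checked by brute force on the example. Below is a minimal Python sketch, assuming variables numbered 1..n and the right-hand side fixed to 1 as in the text; `random_xor`, `satisfies_xor` and `count_satisfying` are hypothetical helper names. Over all 2^n assignments, any non-empty XOR is satisfied by exactly half of them, so the example XOR keeps 512 of the 1024 assignments to v1…v10. (If no variable happens to be picked, the XOR reads 0 = 1 and nothing survives.) On the solutions of an actual CNF the halving is only approximate, which is what the paper’s proof is about.

```python
import itertools
import random


def random_xor(num_vars, rng):
    """Pick each of v1..v{num_vars} independently with probability 1/2."""
    return [v for v in range(1, num_vars + 1) if rng.random() < 0.5]


def satisfies_xor(assignment, xor_vars, rhs=1):
    """True if the number of true variables in xor_vars has parity rhs."""
    return sum(assignment[v] for v in xor_vars) % 2 == rhs


def count_satisfying(num_vars, xor_vars, rhs=1):
    """Brute-force count of assignments to v1..v{num_vars} satisfying the XOR."""
    count = 0
    for bits in itertools.product([False, True], repeat=num_vars):
        assignment = dict(zip(range(1, num_vars + 1), bits))
        if satisfies_xor(assignment, xor_vars, rhs):
            count += 1
    return count


# The XOR from the text: v1 ⊕ v2 ⊕ v5 ⊕ v8 = 1, over v1..v10.
print(count_satisfying(10, [1, 2, 5, 8]))  # 512

# A randomly generated XOR, with variables picked as the text describes.
xor_vars = random_xor(10, random.Random(42))
print(xor_vars, count_satisfying(10, xor_vars))
```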

OK, so all we need to do is add these XORs to our original problem and we are done! This sounds easy, but there is a small hurdle and a big one.

The small hurdle is that the original problem is a CNF, i.e. a conjunction of disjunctions, looking like “(v1 OR v2) AND (v2 OR not v3 OR not v4) AND…”. An XOR obviously does not look like this. The straightforward translation of an XOR over k variables into CNF needs 2^(k-1) clauses, which is exponential, so we need to introduce some fresh variables to cut the XOR into smaller ones. It’s not that hard to figure this out and eventually add all the XORs to the CNF.
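Both the direct translation and the cutting can be sketched in Python. This is a minimal illustration under assumptions: variables are positive integers, clauses are lists of DIMACS-style literals, and `xor_to_cnf` / `cut_xor` are hypothetical helper names, not CryptoMiniSat’s code. The direct translation of an XOR over k variables adds one clause per wrong-parity assignment, i.e. 2^(k-1) clauses. The cut introduces one fresh variable per step, defined as the XOR of the variables it replaces, so every solution of the original XOR extends to exactly one solution of the cut version and the model count is unchanged.

```python
import itertools


def xor_to_cnf(xor_vars, rhs):
    """Direct encoding: one clause per assignment with the wrong parity.
    An XOR over k variables yields 2**(k-1) clauses of length k."""
    clauses = []
    for bits in itertools.product([False, True], repeat=len(xor_vars)):
        if sum(bits) % 2 != rhs:
            # This clause is falsified by exactly this (forbidden) assignment.
            clauses.append([-v if b else v for v, b in zip(xor_vars, bits)])
    return clauses


def cut_xor(xor_vars, rhs, next_var, max_len=3):
    """Split a long XOR into XORs of at most max_len variables.
    Each fresh variable t gets XOR(chunk) ⊕ t = 0, i.e. t = XOR(chunk).
    Returns the list of (variables, rhs) pieces and the next unused variable."""
    assert max_len >= 3, "with max_len < 3 the XOR would never get shorter"
    pieces = []
    rest = list(xor_vars)
    while len(rest) > max_len:
        chunk, rest = rest[:max_len - 1], rest[max_len - 1:]
        fresh = next_var
        next_var += 1
        pieces.append((chunk + [fresh], 0))
        rest = [fresh] + rest  # the fresh variable stands in for the chunk
    pieces.append((rest, rhs))
    return pieces, next_var


long_xor = list(range(1, 9))  # v1 ⊕ ... ⊕ v8 = 1
print(len(xor_to_cnf(long_xor, 1)))  # 128
pieces, _ = cut_xor(long_xor, 1, next_var=9)
print(sum(len(xor_to_cnf(vs, r)) for vs, r in pieces))  # 24
```

The clause count of the cut version grows linearly with the XOR length instead of exponentially, at the price of one extra variable per cut.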

XORs, CDCL, and Gauss-Jordan Elimination

The larger hurdle is that once the XORs are in the CNF via this translation, the CNF becomes exponentially hard to solve using standard CDCL, as used in most SAT solvers. This is because standard CDCL needs exponential effort to perform Gauss-Jordan elimination, yet we need Gauss-Jordan elimination: the XORs will interact with each other, as we expect there to be many of them. Without being able to combine the XORs with each other efficiently and derive information from them, it is practically impossible to solve the CNF.
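To illustrate what Gauss-Jordan elimination derives from interacting XORs, here is a minimal Python sketch over GF(2). It is an offline, one-shot elimination, not the incremental version tightly integrated into the search of a solver such as CryptoMiniSat. Each XOR is assumed to be stored as a bitmask of its variables (bit 0 is x1, bit 1 is x2, and so on) plus its right-hand side, and `gauss_jordan_gf2` / `implied_units` are hypothetical names. Adding two rows is the same as XOR-ing the two constraints.

```python
def gauss_jordan_gf2(rows, num_vars):
    """Reduce a system of XORs to reduced row echelon form over GF(2).

    rows: list of (mask, rhs); bit i of mask set means variable i is in the XOR.
    Returns (reduced_rows, consistent). The system is inconsistent iff some
    row reduces to 0 = 1.
    """
    rows = [[mask, rhs] for mask, rhs in rows]
    pivot = 0
    for col in range(num_vars):
        bit = 1 << col
        # Find a row at or below the pivot position containing this variable.
        sel = next((i for i in range(pivot, len(rows)) if rows[i][0] & bit), None)
        if sel is None:
            continue
        rows[pivot], rows[sel] = rows[sel], rows[pivot]
        p_mask, p_rhs = rows[pivot]
        # Jordan step: eliminate the variable from every other row, above and below.
        for i in range(len(rows)):
            if i != pivot and rows[i][0] & bit:
                rows[i][0] ^= p_mask  # adding two XORs: XOR their variable sets
                rows[i][1] ^= p_rhs   # and their right-hand sides
        pivot += 1
    consistent = not any(mask == 0 and rhs == 1 for mask, rhs in rows)
    return [(mask, rhs) for mask, rhs in rows], consistent


def implied_units(rows):
    """A reduced row with exactly one variable fixes that variable's value."""
    units = {}
    for mask, rhs in rows:
        if mask and mask & (mask - 1) == 0:  # exactly one bit set
            units[mask.bit_length() - 1] = bool(rhs)
    return units


# x1 ⊕ x2 ⊕ x3 = 1 and x2 ⊕ x3 = 0 together imply x1 = 1 (variable index 0).
reduced, ok = gauss_jordan_gf2([(0b111, 1), (0b110, 0)], num_vars=3)
print(ok, implied_units(reduced))  # True {0: True}

# x1 ⊕ x2 = 1 together with x1 ⊕ x2 = 0 is a conflict.
print(gauss_jordan_gf2([(0b11, 1), (0b11, 0)], num_vars=2)[1])  # False
```

Neither XOR in the first example fixes any variable on its own; only their combination does, which is the kind of reasoning a solver working purely on the clausal translation struggles to reproduce.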

The solution is to tightly integrate Gauss-Jordan elimination into the solving process. CryptoMiniSat was the first solver to do this tight integration (albeit only for Gaussian elimination, which is sufficient). Other solvers have followed, notably the work by Tero Laitinen and the work by Cheng-Shen Han and Jie-Hong Roland Jiang. CryptoMiniSat currently uses the code by Han and Jiang after some cleanup and updates.

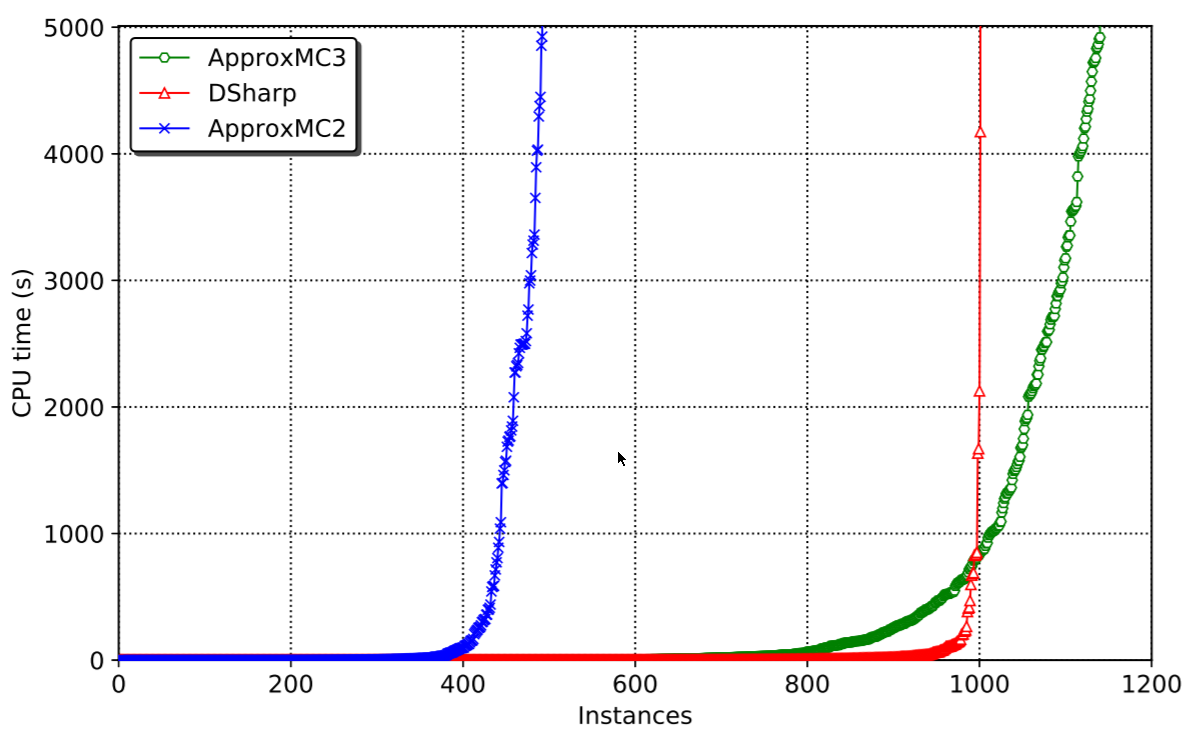

The latest work on ApproxMC and CryptoMiniSat has added one more thing besides the tight integration into the CDCL cycle: it now allows pre- and inprocessing to run while the XORs are inside the system. This brought serious speedups, as pre- and inprocessing are important factors in SAT solving. In fact, all modern SAT solvers depend strongly on them being active and working.

Concluding Remarks

We have gone from what model counting is, through how approximate counting works at a high level, all the way to the details of running such a system inside a modern SAT solver. If you want to try it out, you can download the pre-built binaries or build it from source.